What is a bisect on your source history?

Git’s bisect command is extremely powerful, and I won’t be covering it here. However, Git describes the feature as a way to “find by binary search the change that introduced a bug.”

Why do I need to look through source code history to find why a bug was introduced?

It’s true that many bugs are so basic that as soon as you hear about one, you immediately understand where it is, why it’s broken, and how to fix it. In that scenario, you don’t need this approach.

However, suppose you know a bug was introduced sometime in the past but aren’t sure when or how. I think we could all agree that a binary search through the history of your code’s changes is a pretty good way to find the specific changeset that introduced it. Once you have a handle on that specific code change, it becomes much easier to understand what changed, why it introduced the bug, and how to fix it.

High-level steps/concept:

- First, you need a reproducible bug.

- Next, find a commit in the past where you know the bug does not exist. (Say you know that this bug didn’t exist 3 weeks ago.)

- Now, starting from that “good” commit, binary search through the source history toward a commit where the bug does exist (typically the most recent one). Note whether each commit you test is good or bad, and keep searching until you’ve found the first commit in which the bug appears.

- Analyze that commit until you understand what introduced the bug and how, then fix it.
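The steps above are a classic binary search. The Python sketch below is a minimal illustration under a few assumptions. The changesets are already in a list ordered oldest to newest. The first entry is known good and the last is known bad. `is_bad` is a hypothetical callback standing in for “check out this version and run your tests.”

```python
from typing import Callable, Sequence


def find_first_bad(changesets: Sequence[int], is_bad: Callable[[int], bool]) -> int:
    """Return the first changeset for which is_bad() is True.

    Assumes changesets is ordered oldest -> newest, changesets[0] is known
    good and changesets[-1] is known bad (the endpoints are never re-tested).
    """
    if len(changesets) < 2:
        raise ValueError("need at least a known-good and a known-bad changeset")
    good, bad = 0, len(changesets) - 1  # list positions, not changeset numbers
    while bad - good > 1:
        mid = (good + bad) // 2
        if is_bad(changesets[mid]):
            bad = mid   # bug already present here: look earlier
        else:
            good = mid  # bug not there yet: look later
    return changesets[bad]


if __name__ == "__main__":
    # Hypothetical history in which the bug was introduced in changeset 31.
    history = [13, 17, 22, 25, 31, 38, 46, 52, 60, 71, 79]
    print(find_first_bad(history, lambda cs: cs >= 31))  # prints 31
```

Each call to `is_bad` corresponds to one checkout-and-test round in the manual process below.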

One manual approach to TFS bisect.

TFS doesn’t have a built-in bisect feature (that I’m aware of), which leaves us with some manual bookkeeping that we wouldn’t have to do if we were using Git.

Side note: If you’re familiar with Git, I’d recommend using git-tfs or the new git-tf tool to clone your TFS repository and then using git bisect to accomplish these steps.

Let’s assume you can find a commit in the past that you know doesn’t have the bug.

Open PowerShell and cd into the root of your project directory. Then run a tf.exe command that copies your history, as text, to the clipboard. We’ll use this for our bookkeeping.

I’m using PowerShell and have tf.exe on my %PATH%. Notice the pipe to the ‘clip’ command at the end of the tf call. It places the output of the command on the clipboard.

>tf history ./* /recursive /noprompt | clip

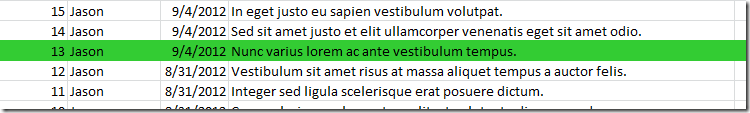

Let’s say the above command places the following into our clipboard.

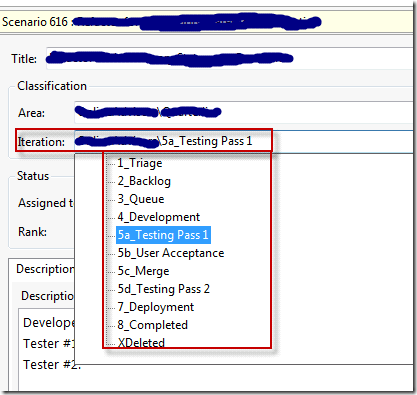

Paste the output (which is now on your clipboard) into Excel, Notepad, or wherever you want to keep track of your work.
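If you’d rather work with the history in a script than in Excel, you can save it to a file instead of the clipboard, for example `tf history ./* /recursive /noprompt > history.txt`. The Python sketch below then pulls out the changeset numbers. It assumes each history row begins with the changeset number at the start of the line, as in the brief tabular output shown above. It skips any line that doesn’t start that way, such as the header and the dashed separator. The sample text is made up for illustration.

```python
import re
from typing import List

# Assumption: a history row starts with the changeset number in column 0,
# followed by whitespace and the user name, date, and comment.
ROW = re.compile(r"^(\d+)\s")


def parse_changesets(history_text: str) -> List[int]:
    """Return the changeset numbers found in tf history output, oldest first."""
    ids = []
    for line in history_text.splitlines():
        match = ROW.match(line)
        if match:
            ids.append(int(match.group(1)))
    # tf history lists newest first; bisecting is easier to reason about oldest first.
    return sorted(set(ids))


if __name__ == "__main__":
    sample = (
        "Changeset User     Date       Comment\n"
        "--------- -------- ---------- -------------------\n"
        "79        alice    3/14/2012  Latest change\n"
        "46        bob      3/01/2012  Refactor parser\n"
        "13        alice    2/20/2012  Known good\n"
    )
    print(parse_changesets(sample))  # prints [13, 46, 79]
```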

We know that the bug did not exist at commit #13, so let’s mark it as ‘good’.

Now we start our binary search through the different commits to find our bug.

Find a commit midway between this commit (#13) and the most recent commit (#79).

You don’t have to be precise about the binary search. I tend to just eyeball the ‘middle’ and go from there, but you’re more than welcome to do the binary search exactly.
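If you do split the range exactly in half each time, you can estimate how many checkouts to expect. Let n be the number of changesets after the known-good one, up to and including the known-bad one. The suspect can be any of those n, and each checkout halves the candidates, so:

$$\text{checkouts} \le \lceil \log_2 n \rceil$$

For example, suppose (hypothetically) that 30 changesets touched your folder after #13, up to and including #79. You would need at most $\lceil \log_2 30 \rceil = \lceil 4.91 \rceil = 5$ checkouts. Eyeballing the middle may cost an extra step or two. Count n by position in your history list rather than by subtracting changeset numbers: the numbers are shared across the collection, so a folder’s history usually has gaps.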

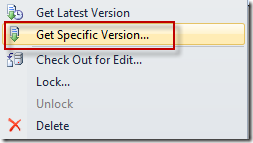

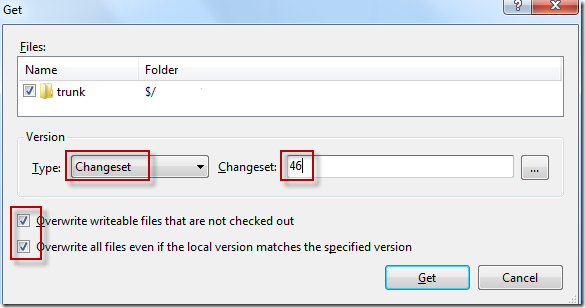

Now use your TFS tools to check out this specific version. In this case, we’ll check out commit #46.

I prefer using the command line to check out a specific version, since commands are easier to repeat and we already have the console open from earlier.

>tf get ./* /recursive /force /overwrite /version:46

Or you can use the GUI to get a specific version.

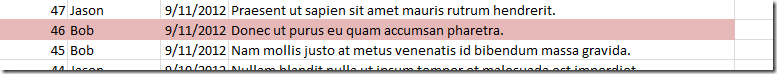
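Each round of the search is the same `tf get` with a different version number, so it scripts easily. The minimal Python sketch below builds exactly the command line shown above. By default it only prints that command; pass `dry_run=False` and it runs the command through Python’s `subprocess` module. It assumes tf.exe is on your PATH and that you run it from the root of your workspace. The helper names are hypothetical.

```python
import subprocess
from typing import List


def tf_get_args(changeset: int) -> List[str]:
    """Build the same 'tf get' command used above for one changeset."""
    return ["tf", "get", "./*", "/recursive", "/force", "/overwrite",
            f"/version:{changeset}"]


def checkout(changeset: int, dry_run: bool = True) -> List[str]:
    """Print (dry run) or execute the tf get for a changeset.

    Assumes tf.exe is on PATH and the current directory is the workspace root.
    """
    args = tf_get_args(changeset)
    if dry_run:
        print(" ".join(args))
    else:
        # No shell is involved, so './*' reaches tf as-is and tf expands it.
        subprocess.run(args, check=True)  # raises CalledProcessError on failure
    return args


if __name__ == "__main__":
    checkout(46)  # prints: tf get ./* /recursive /force /overwrite /version:46
```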

With version #46 checked out, we run our tests and find that the bug exists here. Mark it as ‘bad’ to signify the bug is here.

Now we can continue our binary search between commits #13 and #46 until we narrow down the exact commit where the bug first shows up.

As the numbers on the left of the screenshot above show, it took us 5 checkouts to find the commit where the bug was introduced.

Now the rest is up to you. I usually spend some time looking at the diff to understand why that specific commit introduces the bug. If you keep your regular commits small, it tends to be pretty easy to understand why the bug was introduced and how to fix it.

Don’t forget to ‘get latest’ before doing any real work, so you’re not stuck with your source code way back in time.

These steps should be automated.

It’s true that a tool should do the bookkeeping for us. In fact, I started writing a PowerShell implementation, but I never finished it because it didn’t seem worth my time. The manual approach works well, and it’s not something I have to use often. However, I did find someone who has written a tool that looks promising: http://gr3dman.name/blorg/posts/2010-12-03-tf-bisect.html
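To give a feel for what such a tool has to track, here is a rough Python sketch of the spreadsheet bookkeeping. It is not my unfinished PowerShell version, and it has no connection to the linked tool. `BisectLog` and its methods are hypothetical names. The sketch assumes you already have the changeset list sorted oldest first. It records good/bad marks, suggests the next changeset to check out, and reports the culprit once the range has closed.

```python
from typing import Dict, List, Optional, Tuple


class BisectLog:
    """Hypothetical stand-in for the Excel/Notepad sheet.

    Tracks which changesets were marked good or bad and suggests the next
    one to check out. `changesets` must be sorted oldest first.
    """

    def __init__(self, changesets: List[int]) -> None:
        self.changesets = list(changesets)
        self.position = {cs: i for i, cs in enumerate(self.changesets)}
        self.marks: Dict[int, str] = {}

    def mark(self, changeset: int, state: str) -> None:
        if state not in ("good", "bad"):
            raise ValueError("state must be 'good' or 'bad'")
        if changeset not in self.position:
            raise KeyError(f"changeset {changeset} is not in the history")
        self.marks[changeset] = state

    def _bounds(self) -> Tuple[int, int]:
        """Return (latest good position, earliest bad position)."""
        goods = [self.position[cs] for cs, s in self.marks.items() if s == "good"]
        bads = [self.position[cs] for cs, s in self.marks.items() if s == "bad"]
        if not goods or not bads:
            raise ValueError("mark at least one good and one bad changeset")
        good, bad = max(goods), min(bads)
        if bad < good:
            raise ValueError("a bad changeset precedes a good one; recheck your marks")
        return good, bad

    def next_to_test(self) -> Optional[int]:
        """Changeset to check out next, or None once the culprit is pinned down."""
        good, bad = self._bounds()
        if bad - good <= 1:
            return None
        # Pick the middle by list position: a folder's changeset numbers have gaps.
        return self.changesets[(good + bad) // 2]

    def first_bad(self) -> Optional[int]:
        """The changeset that introduced the bug, once the search has converged."""
        good, bad = self._bounds()
        return self.changesets[bad] if bad - good == 1 else None
```

In use, you would mark #13 as good and #79 as bad, then call `next_to_test()`. Check out the changeset it returns, run your tests, mark the result, and repeat until it returns None. At that point, `first_bad()` names the commit to diff.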

Happy bug hunting.

I love KDiff3 :)